You don’t have to be plugged into the manufacturing space for very long before you hear the terms “computer vision” and “machine learning.” Both technologies are playing a big part in the advances going on in manufacturing, but at Instrumental, we believe that machine learning techniques can dramatically improve upon the traditional computer vision techniques used today. In this article, we’ll focus on a couple of realistic cases to demonstrate practical implementations of these two technologies, and how they can benefit you on the assembly line.

From Human Vision to Computer Vision

In many factories, inspecting units involves laying a transparency with physically drawn lines over a computer screen and having a human operator judge whether the unit falls within those guidelines. Traditional computer vision automates and improves upon this process by relying on a “list” of human-constructed rules to check and follow. As an example, consider a product with a thin cosmetic gap. There’s a little margin of error, but a gap that’s too big or too small will ruin the product’s aesthetic. A computer vision algorithm can include parameters for edge detection (where the gap should be, and what the maximum and minimum allowable sizes are), enabling a computer to determine whether the unit is within specification. For other features on the same product, you would need to specify and write separate algorithms. During this process, you would need foresight into all the different ways those features could appear, and you would need to train the algorithm with a set of golden and failing units.
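To make the idea of a hand-written rule concrete, here is a minimal sketch of a gap check. It assumes the gap shows up as a run of dark pixels in a one-dimensional intensity profile taken across the seam. The names `GapSpec`, `measure_gap`, and `within_spec`, and all of the numbers, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class GapSpec:
    min_width_px: int    # smallest acceptable gap, in pixels (assumed value)
    max_width_px: int    # largest acceptable gap, in pixels (assumed value)
    dark_threshold: int  # intensities below this count as "gap" (assumed value)


def measure_gap(profile, dark_threshold):
    """Return the length of the longest run of dark pixels in the profile."""
    longest = current = 0
    for value in profile:
        current = current + 1 if value < dark_threshold else 0
        longest = max(longest, current)
    return longest


def within_spec(profile, spec):
    """Apply the rule: the measured gap must lie inside [min, max]."""
    width = measure_gap(profile, spec.dark_threshold)
    return spec.min_width_px <= width <= spec.max_width_px


spec = GapSpec(min_width_px=3, max_width_px=5, dark_threshold=50)
good = [200, 198, 20, 15, 18, 22, 201, 199]    # 4 dark pixels
wide = [200, 20, 15, 18, 22, 19, 17, 21, 199]  # 7 dark pixels

print(within_spec(good, spec))  # True
print(within_spec(wide, spec))  # False
```

Every number in `GapSpec` is something an engineer has to decide in advance, which is exactly the foresight the rules-based approach demands.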

Computer vision has several benefits on the assembly line. With modern programs, automatic inspection of units using computer vision means that more mistakes are caught before units ramp into mass production. Part orientation, presence detection, part dimensions, and angles of part geometries can be checked according to your specifications. If your computer vision program finds errors more quickly than human operators can, this translates into less time and fewer resources wasted in the factory.

Machine Learning

The Achilles’ heel of computer vision, however, is the rules-based system it must follow, which makes catching future issues highly dependent on past mistakes. If a specific defect isn’t covered by the rules, or if new changes to a design haven’t been taken into account, then computer vision can produce false passes (defective units that are accepted) and false failures (good units that are rejected).

In contrast, consider a standard machine learning model, where a dataset is used to “train” the model instead of telling the algorithm what rules it should follow. Specifically, the algorithm takes a set of training data that contains the “result” you want, and uses it to build a model that creates the rules. This means that a machine learning model intended to catch defective units develops its own guidelines to identify defects, even if the specific type of defect was not part of the training data. And let’s face it: as engineers, we know that the defects we didn’t anticipate are some of the most important to catch and to understand.
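As a toy illustration of a model that “creates the rules” from labeled results, the sketch below learns a single pass/fail threshold from example measurements instead of having an engineer type it in. It is deliberately the simplest possible learner (a one-feature decision stump), not a description of any production system; the function name `learn_threshold` and the sample data are hypothetical.

```python
def learn_threshold(samples):
    """Pick the threshold that best separates good from defective units.

    samples: list of (measurement, is_defective) pairs.
    The learned rule is: measurement > threshold means defective.
    """
    values = sorted({measurement for measurement, _ in samples})
    # Candidate thresholds sit halfway between neighbouring measurements.
    candidates = [(a + b) / 2 for a, b in zip(values, values[1:])]

    def training_errors(threshold):
        return sum((m > threshold) != bad for m, bad in samples)

    return min(candidates, key=training_errors)


# Gap widths in mm, labeled by a human as good (False) or defective (True).
labeled_units = [
    (0.48, False), (0.50, False), (0.52, False),
    (0.61, True), (0.65, True),
]

threshold = learn_threshold(labeled_units)
print(threshold)         # falls between 0.52 and 0.61
print(0.70 > threshold)  # a new, wider gap is classified as defective
```

The threshold here comes from the data rather than from a specification sheet, so adding new labeled units changes the rule without anyone rewriting it.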

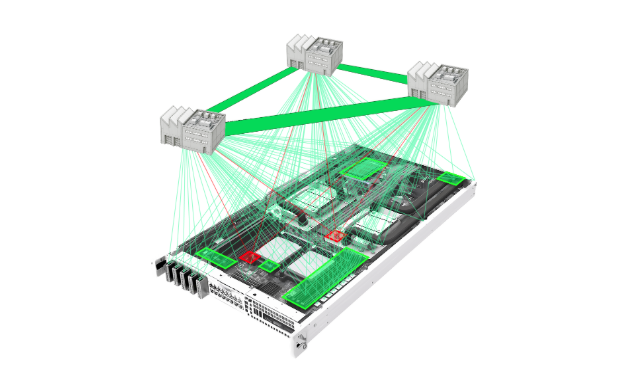

Instrumental has taken traditional machine learning a step further. Our anomaly detection software, Detect, leverages Convolutional Neural Networks and unsupervised learning to train the model as it is exposed to new units from the factory line. Unsupervised learning typically requires large training sets to work, with minimum sample thresholds set as large as 100K or 1M images; Instrumental Detect, however, starts working after seeing only 30 images. Most importantly, no Golden Unit is required.

Examples

Let’s compare computer vision and machine learning on the assembly line with a couple of realistic scenarios:

1) Measuring Gaps

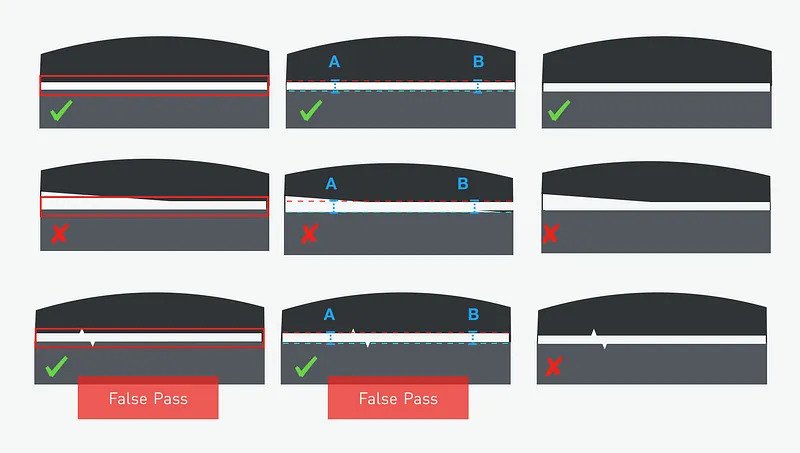

Gap measurement is a canonical use case for computer vision. A standard off-the-shelf algorithm finds edges, compares them to an overlay, and reports whether the distance between edges exceeds what the overlay allows. Custom computer vision algorithms use Hough lines or another common method to find product edges and to measure between them. They can also measure the gap in different locations and enable a specification on the delta from location to location. That gap measurement and the overall delta would be the “rules” that enable the algorithm to identify defective units.
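The two “rules” described above, a per-location gap range and a limit on the delta between locations, can be sketched as follows. This assumes the gap widths have already been measured (for example, from detected edges) and are passed in as numbers; `check_gap_rules` and the limits used are hypothetical.

```python
def check_gap_rules(gaps_mm, min_gap, max_gap, max_delta):
    """Return a list of rule violations; an empty list means the unit passes.

    gaps_mm: dict mapping a location name to the measured gap width in mm.
    """
    failures = []
    # Rule 1: every measured gap must be inside the allowed range.
    for location, gap in gaps_mm.items():
        if not (min_gap <= gap <= max_gap):
            failures.append(f"gap at {location} out of range: {gap} mm")
    # Rule 2: the spread between the widest and narrowest gap is limited.
    delta = max(gaps_mm.values()) - min(gaps_mm.values())
    if delta > max_delta:
        failures.append(f"delta {delta:.2f} mm exceeds {max_delta} mm")
    return failures


limits = dict(min_gap=0.40, max_gap=0.60, max_delta=0.05)
uniform = {"A": 0.50, "B": 0.52}
tilted = {"A": 0.42, "B": 0.58}  # each gap is in range, but the seam is tilted

print(check_gap_rules(uniform, **limits))  # []
print(check_gap_rules(tilted, **limits))   # one delta violation
```

Note that the tilted unit is only caught because someone thought to add the delta rule; the range rule alone would pass it.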

In addition to doing all the things a custom computer vision algorithm can do, a machine learning algorithm can pick up abnormalities that were unanticipated or that are difficult to write rules around, such as contamination, flashy edges, or scratches and dents.

On the first row, the product has a reasonable gap size, and correctly passes inspection for a human test, computer vision, and machine learning. On the second row, the product has a tilted gap, and correctly fails inspection by all three possible methods on the assembly line. On the third row, two small jagged edges fall within rough overlay specs given to workers on an assembly line, incorrectly passing brief human inspection. Computer vision also falsely passes this unit, as the jagged edges aren’t detected by gap testing at points A and B. Machine learning, however, detects the jagged edges as something it hasn’t seen before, and flags the unit for additional review.
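The third row can be illustrated with a small sketch. To be clear, this is not how Instrumental Detect works; it is a simple statistical stand-in (per-position mean and standard deviation) for the general idea of flagging anything that doesn’t look like previously seen units. The profiles, the sampling positions, and the score threshold of 4.0 are all assumptions made for this example.

```python
from statistics import mean, stdev


def fit_profile_model(training_profiles):
    """Learn the mean and standard deviation of the gap at each position."""
    columns = zip(*training_profiles)
    return [(mean(col), stdev(col)) for col in columns]


def anomaly_score(model, profile):
    """Largest deviation from the learned mean, in standard deviations."""
    return max(
        abs(x - mu) / max(sigma, 1e-6)  # guard against zero spread
        for x, (mu, sigma) in zip(profile, model)
    )


# Gap width (mm) sampled at six positions along the seam of normal units.
training = [
    [0.50, 0.51, 0.50, 0.49, 0.50, 0.51],
    [0.51, 0.50, 0.49, 0.50, 0.51, 0.50],
    [0.49, 0.50, 0.51, 0.51, 0.49, 0.50],
    [0.50, 0.49, 0.50, 0.50, 0.50, 0.49],
]
model = fit_profile_model(training)

# Jagged edges in the middle; points A (index 0) and B (index 5) look fine.
jagged = [0.50, 0.50, 0.62, 0.41, 0.50, 0.50]

rule_pass = all(0.40 <= jagged[i] <= 0.60 for i in (0, 5))
flagged = anomaly_score(model, jagged) > 4.0  # assumed threshold

print(rule_pass)  # True: the two-point rule misses the defect
print(flagged)    # True: the unit deviates from what the model has seen
```

The rule-based check only looks where it was told to look, while the learned model compares the whole profile against normal units and flags the jagged section for review.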

2) Measuring Profiles or Organic Shapes

Organic shapes are a much tougher challenge to tackle for computer vision. When trying to see if a nonlinear shape is aligned in a desired pattern, off-the-shelf computer vision algorithms no longer cut it. Instead, customization is needed to tailor the algorithm to the specifications of your product. It’s certainly possible to use complex systems and try to wrap margins of error around shapes, but the extra customization can still fail depending on how the curvature or necessary margins of error look. In addition, each time the design or fixturing of a product changes, the computer vision algorithm must be tweaked with additional rules to correctly match new dimensions. A machine learning algorithm, on the other hand, can be trained to recognize the pattern of the organic shape, avoiding the need to create many tailored rules to attempt to approximate the allowable dimensions of the shape. As an added bonus, this algorithm works even if the product rapidly iterates through different designs.

The first row features a “good” unit, and both computer vision and machine learning models correctly pass it. The second row features a “bad” unit with a smushed side, which incorrectly passes the computer vision guidelines for the shape. Machine learning, however, correctly prevents this unit from moving forward. On the third row, computer vision passes the unit based on shape, but fails to detect a severe discoloration, resulting in another false pass. Machine learning, in comparison, can identify that although the shape is good, the discoloration does not match other units, and flags the unit for a more focused inspection.

The Future of Manufacturing

While computer vision has been used in automated inspection for decades, it is still ill-suited for many inspection scenarios — so much so that the majority of Final Vision Inspection on the assembly line is still done by trained human operators. Even with the best training and intentions, human inspectors will eventually get tired and start to make mistakes — the question is how soon.

Modern machine learning techniques, while still relatively nascent in the field of manufacturing, have the potential to reinvent automated inspection. These techniques will spot defects more like a human than a machine, but will analyze units in machine-time. With speed and self-learning capabilities, inspection will be something that isn’t just relegated to the beginning and end of the line: it will be at or after every step. With this much data, engineers will know exactly where cosmetic damage was done, which machine or operator went out of process, and when a unit should be pulled off of the line for repair. This kind of data is key to creating the insights required to build future assembly lines that are self-analyzing and self-correcting.

Don’t just take our word for it; request a demo to see machine-learning-powered insights work for you.
