Rustem Feyzkhanov is a machine learning engineer at Instrumental. Rustem, along with the rest of the Instrumental ML team, create analytical models for manufacturing defect detection. Rustem’s particular passion about serverless infrastructure (and AI deployments on it) often leads him to contribute to notable conferences – the content below is a sneak peak of his most recent speaking engagement at the 7th Annual Global Big Data Conference.

Deep learning and machine learning have become more and more essential for many businesses, for both internal and external use. This is especially true for companies processing raw data and hoping to extract useful knowledge and actionable insights. Many are looking to adopt a serverless approach for its benefits, but implementation has challenges.

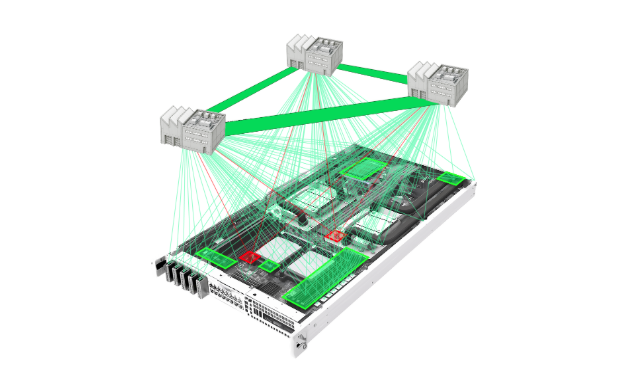

Two main challenges arise when organizing deep learning applications in the cloud: training of the deep learning model and inference (prediction) of the model at scale. The root of these challenges for deployment of deep learning applications is the maintenance of the GPU cluster. GPU clusters are usually pretty expensive and are hard to dynamically scale.

A serverless approach for deep learning training involves using fully dynamic clusters for training the models. Services like AWS Batch allow you to start a GPU cluster dynamically with as much as one VM per job. This is useful if you want to train multiple models on different sets of hyperparameters. After the job is finished, the VM will either process next or will be terminated. This sequence is key so you don’t have to pay for idle instances. Additionally, AWS Batch allows you to use spot instances, which means you can reduce your training costs by almost three times.

While training is usually a long running task that utilizes a lot of data, inference, on the other hand, is a very short task which doesn’t use a lot of data. Due to those differences, using a dynamic GPU cluster may be hard as it won’t be able to scale according to the load. The serverless approach, in this case, is to provide a simple, scalable and reliable architecture. Although single inference on the CPU takes more time than on the GPU, large batches can be processed much faster and serverless processing can scale almost instantly since everything is run independently in parallel.

An added benefit is that you don’t have to pay for unused server time. Serverless architectures have a pay-as-you-go model and it allows a business to get more processing power for the same price. Also there is no penalty for horizontal scaling when using the serverless approach, so it allows to scale to the business case limit, not the implementation limit. Additionally, it allows you to process peak loads very quickly.

The last benefit to keep in mind for the serverless approach are easy integrations between your model and other parts of AWS infrastructure. You can use your model with API Gateway to make a deep learning RESTful API, with SQS to make a deep learning pipeline and with Step Functions to make a deep learning workflow. Overall, the use of workflows and pipelines make organization easier and more complex training and inference possible – which is usually required by real business problems like A/B model testing, model updates, and frequent model retraining.

Hopefully, with these approaches for training and inference, your team can overcome the challenges of implementing serverless deep learning and start reaping the benefits.